February 26, 2023

Top tokenization methods you should know with python examples

Tokenization means breaking down text into smaller units, such as words, phrases, or sentences.

Tokenization is an important concept in Natural Language Processing (NLP) that involves breaking down the text into smaller, more manageable units called tokens. This process is necessary because computers understand and process text in a very different way than humans do. Breaking down the text into individual tokens allows machines to analyze and interpret text more effectively. Many different models can be used for tokenization, including rule-based, statistical, and deep-learning models. Rule-based models use pre-defined rules to identify tokens, while statistical models use probabilities to determine the most likely tokens. Deep learning models use neural networks to learn patterns in text and identify tokens based on those patterns.
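To make the rule-based idea concrete, here is a minimal sketch that uses only Python's standard `re` module. The rule itself (a token is either a word, optionally hyphenated, or a single punctuation character) and the names `TOKEN_PATTERN` and `rule_based_tokenize` are assumptions chosen for illustration, not part of any library.

```python
import re

# Illustrative rule: a token is either a word (optionally hyphenated)
# or a single punctuation character. Both names below are hypothetical.
TOKEN_PATTERN = re.compile(r"\w+(?:-\w+)*|[^\w\s]")


def rule_based_tokenize(text):
    """Return every substring that matches the hand-written rule."""
    return TOKEN_PATTERN.findall(text)


tokens = rule_based_tokenize("Rule-based tokenizers split text, quickly.")
print(tokens)
# ['Rule-based', 'tokenizers', 'split', 'text', ',', 'quickly', '.']
```

A single pattern like this is easy to read but brittle: contractions, URLs, and emoticons all need extra rules, which is why library tokenizers ship with much larger rule sets.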

This article covers tokenization with three popular Python libraries: NLTK, spaCy, and Keras.

NLTK also offers more specialized tokenizers, such as TweetTokenizer (as the name suggests, better suited to tweet-style text) and MWETokenizer (the multi-word expression tokenizer, designed to handle fixed phrases or idiomatic expressions that consist of two or more words).
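As a rough illustration of the tweet-oriented rules, the sketch below runs TweetTokenizer on a made-up tweet. The tweet text is an assumption for the example; `strip_handles` and `reduce_len` are options of NLTK's TweetTokenizer.

```python
from nltk.tokenize import TweetTokenizer

# strip_handles drops @mentions; reduce_len shortens runs of three or more
# repeated characters to exactly three.
tweet_tokenizer = TweetTokenizer(strip_handles=True, reduce_len=True)

tweet = "@ulife_ai this is sooooo cool :-) #nlp"  # made-up tweet
tweet_tokens = tweet_tokenizer.tokenize(tweet)
print(tweet_tokens)
```

The emoticon and the hashtag should each survive as a single token, which is the main reason to prefer this tokenizer for social media text.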

The simplest way to use the word_tokenize function is to pass a string of text to it, and it will automatically split the text into individual words or tokens.

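    import nltk
    from nltk.tokenize import (TreebankWordTokenizer,
                               word_tokenize,
                               wordpunct_tokenize,
                               TweetTokenizer,
                               MWETokenizer)

    # word_tokenize relies on NLTK's Punkt data. If it is missing, download it once
    # with nltk.download('punkt') (newer NLTK releases name it 'punkt_tab').
    sentence = "Ulife is the best AI service provider for innovative, modern, and customer-first companies,"
    print(f'Output = {word_tokenize(sentence)}')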

Output:

    Output = ['Ulife', 'is', 'the', 'best', 'AI', 'service', 'provider', 'for', 'innovative', ',', 'modern', ',', 'and', 'customer-first', 'companies', ',']

Note that "customer-first" is kept as a single token. If we also want to split on punctuation inside words, such as this hyphen, we must use the wordpunct_tokenize function instead of word_tokenize:

    print(f'Output = {wordpunct_tokenize(sentence)}')

Output:

    Output = ['Ulife', 'is', 'the', 'best', 'AI', 'service', 'provider', 'for', 'innovative', ',', 'modern', ',', 'and', 'customer', '-', 'first', 'companies', ',']

The other tokenizers are classes rather than functions, so here we must instantiate the classes we have imported previously:

    # MWE: merges registered multi-word expressions into single tokens.
    # ('Martha', 'Jones') does not occur in the sentence, so the tokens are unchanged here.
    tokenizer = MWETokenizer()
    tokenizer.add_mwe(('Martha', 'Jones'))
    print(f'MWE tokenization = {tokenizer.tokenize(word_tokenize(sentence))}')

    # Tweet
    tokenizer = TweetTokenizer()
    print(f'Tweet-rules based tokenization = {tokenizer.tokenize(sentence)}')

    # Treebank
    tokenizer = TreebankWordTokenizer()
    print(f'Default/Treebank tokenization = {tokenizer.tokenize(sentence)}')

Output:

    MWE tokenization = ['Ulife', 'is', 'the', 'best', 'AI', 'service', 'provider', 'for', 'innovative', ',', 'modern', ',', 'and', 'customer-first', 'companies', ',']
    Tweet-rules based tokenization = ['Ulife', 'is', 'the', 'best', 'AI', 'service', 'provider', 'for', 'innovative,', 'modern,', 'and', 'customer-first', 'companies', ',']
    Default/Treebank tokenization = ['Ulife', 'is', 'the', 'best', 'AI', 'service', 'provider', 'for', 'innovative', ',', 'modern', ',', 'and', 'customer-first', 'companies', ',']
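The MWETokenizer only changes the result when a registered expression actually appears in the input. A minimal sketch with a made-up sentence that does contain the expression (the sentence is an assumption for illustration, and plain `str.split` stands in for a word tokenizer to keep the example self-contained):

```python
from nltk.tokenize import MWETokenizer

# Register the expression up front; '_' is the default separator used to join it.
mwe_tokenizer = MWETokenizer([('Martha', 'Jones')], separator='_')

# MWETokenizer works on an already tokenized list of words.
words = "Martha Jones joined the team".split()
merged = mwe_tokenizer.tokenize(words)
print(merged)
# ['Martha_Jones', 'joined', 'the', 'team']
```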

NLTK can also split a paragraph of text into individual sentences with sent_tokenize. Each sentence can then be passed to word_tokenize.

    from nltk.tokenize import sent_tokenize, word_tokenize

    text = "This is the first sentence. This is the second sentence. And this is the third sentence."
    sentences = sent_tokenize(text)

    for sentence in sentences:
        words = word_tokenize(sentence)
        print(words)

Output:

    ['This', 'is', 'the', 'first', 'sentence', '.']
    ['This', 'is', 'the', 'second', 'sentence', '.']
    ['And', 'this', 'is', 'the', 'third', 'sentence', '.']
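Under the hood, sent_tokenize uses a pretrained Punkt model for the chosen language. As a sketch of the same mechanism without the pretrained data, the example below instantiates an untrained PunktSentenceTokenizer directly. This is an assumption-laden simplification: an untrained instance knows no abbreviations, so it is only reliable on simple text like this.

```python
from nltk.tokenize.punkt import PunktSentenceTokenizer

# Untrained Punkt tokenizer: no learned abbreviations or collocations.
punkt = PunktSentenceTokenizer()

text = "This is the first sentence. This is the second sentence. And this is the third sentence."
sentences = punkt.tokenize(text)

for sentence in sentences:
    print(sentence)
```

For real text, prefer sent_tokenize, since its pretrained model copes with cases such as "Dr." or "e.g." that this bare instance would likely split on.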

word_tokenize can also be used to tokenize non-English text. However, in this case, it is necessary to specify the language to ensure proper tokenization.

    from nltk.tokenize import word_tokenize

    text = "Das ist ein Beispieltext auf Deutsch."
    tokens = word_tokenize(text, language='german')
    print(tokens)

Output:

    ['Das', 'ist', 'ein', 'Beispieltext', 'auf', 'Deutsch', '.']

spaCy is a popular open-source natural language processing library that provides many tools for processing and analyzing text data. One of the main components of spaCy is its tokenizer, which is responsible for breaking down a piece of text into individual tokens.

The spaCy tokenizer is rule-based: a set of rules determines how to split the input text into individual tokens. It is designed to work with various languages and handles a wide range of cases, including special characters, punctuation, and contractions.

One of the advantages of the spaCy tokenizer is its ability to handle complex cases, such as URLs, email addresses, and phone numbers. spaCy tokens also carry several built-in attributes, such as lemma, part of speech, and named-entity labels, which the other components of a loaded pipeline fill in and which can be useful for downstream tasks like text classification and information extraction.
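A minimal sketch of how URLs and email addresses are handled, assuming spaCy is already installed. It uses `spacy.blank("en")`, which builds an English pipeline containing only the tokenizer, so no model download is needed. The example sentence is made up.

```python
import spacy

# Tokenizer-only English pipeline: no tagger, parser, or entity recognizer.
nlp = spacy.blank("en")

doc = nlp("Email hello@example.com or visit https://spacy.io today")

for token in doc:
    # like_email and like_url are lexical attributes, so they work without a trained model.
    print(token.text, token.like_email, token.like_url)
```

The email address and the URL should each come out as one token. Attributes such as lemma or part of speech stay empty in a blank pipeline, because they need the trained components of a downloaded model.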

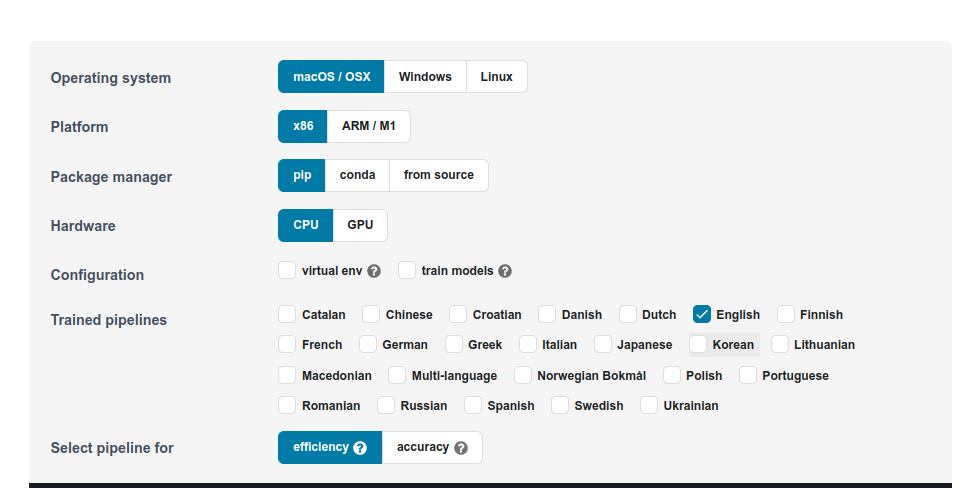

To use it, you must first install spaCy and download a model:

    $ pip install spacy
    $ python3 -m spacy download en_core_web_sm

Visit https://spacy.io/usage to choose the language and configuration that fit your needs.

    import spacy

    nlp = spacy.load("en_core_web_sm")
    doc = nlp("This is an example sentence.")

    # Iterate over the tokens in the document
    for token in doc:
        print(token.text)

Output:

    This
    is
    an
    example
    sentence
    .

Keras is a popular open-source deep-learning library that provides a wide range of tools for building and training neural networks. It also includes several pre-processing functions, including text tokenization.

In Keras, text tokenization is provided by the Tokenizer class in the keras.preprocessing.text module. The Tokenizer class provides a simple and efficient way to tokenize text data, with several options for customizing the tokenization process. Note that recent TensorFlow documentation marks this class as deprecated and recommends the TextVectorization layer for new code.

To use the Tokenizer class, you first need to create an instance of the class and fit it to your text data. This step builds the vocabulary of the tokenizer and assigns a unique integer index to each token, starting at 1 for the most frequent word. By default, the text is lowercased and punctuation is stripped. Here is an example:

    from keras.preprocessing.text import Tokenizer

    # Define some example text data
    text_data = ["This is the first sentence.", "This is the second sentence."]

    # Create a tokenizer and fit it to the text data
    tokenizer = Tokenizer()
    tokenizer.fit_on_texts(text_data)

    # Print the vocabulary of the tokenizer
    print(tokenizer.word_index)

Output:

    {'this': 1, 'is': 2, 'the': 3, 'sentence': 4, 'first': 5, 'second': 6}

Words that occur in both sentences ("this", "is", "the", "sentence") get the lowest indices, followed by the words that occur only once.
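To show what a frequency-ranked word index looks like in isolation, here is a pure-Python sketch of the same idea. It is not Keras's implementation: `build_word_index`, `to_sequence`, and the word pattern are hypothetical names and simplifications, and the example texts are made up.

```python
import re
from collections import Counter

WORD_RE = re.compile(r"[a-z0-9']+")  # simplified notion of a word


def build_word_index(texts):
    """Hypothetical helper: rank lowercased words by frequency, starting at 1."""
    counts = Counter()
    for text in texts:
        counts.update(WORD_RE.findall(text.lower()))
    # most_common() sorts by count and keeps first-seen order for ties.
    return {word: rank for rank, (word, _) in enumerate(counts.most_common(), start=1)}


def to_sequence(text, word_index):
    """Hypothetical helper: replace each known word with its integer index."""
    return [word_index[w] for w in WORD_RE.findall(text.lower()) if w in word_index]


texts = ["The cat sat.", "The dog sat down."]
word_index = build_word_index(texts)
sequences = [to_sequence(t, word_index) for t in texts]

print(word_index)  # {'the': 1, 'sat': 2, 'cat': 3, 'dog': 4, 'down': 5}
print(sequences)   # [[1, 3, 2], [1, 4, 2, 5]]
```

Integer sequences like these are what a neural network's embedding layer consumes, which is why the index is built before training.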

So, that's it. I hope you found this article interesting and that it helps you implement AI into your product. It turns out that this is exactly what we do at Ulife.ai. We help companies change their perspective with AI. We handle data collection, model evaluation, and product development. You can contact us for more information.